The Age of Auto Research, and Other Certainties

Over the past few months, terms like auto research, AI scientist, and agentic discovery have moved quickly from technical circles into the broader conversation around research. What has spread even faster, though, is a familiar narrative: AI will replace researchers.

That narrative is easy to amplify. It fits media headlines, the broader cultural mood around automation, and a style of online discourse that treats each improvement in AI capability as evidence that another human role is about to disappear. So the question keeps returning: if research itself can be automated, what is left for the researcher?

The concern is understandable. For a long time, people assumed AI would first take over the peripheral labor around research: formatting, retrieval, polishing, reproduction, and organization. But once it begins to enter the research process itself, the issue is no longer just efficiency or better tools. It becomes a question about role and identity: if a system can assist research and also perform more and more of its key steps, what exactly remains distinctively human in research?

And yet that question rests on a misleading premise. It imagines research as a world in which all problems already exist objectively, waiting to be solved one by one by a stronger optimizer. But that is not how research actually works. Many important problems do not surface automatically. They must first be noticed, named, and sustained by researchers before they can enter collective view.

From that perspective, auto research may not be a crisis of replacement at all. It may instead be one of the quiet fortunes of our time: a chance to free researchers from lower-level search and return their attention to a higher-value role.

What auto research is actually good at

The first thing we should admit is simple: auto research is powerful, and it will only become more powerful.

Automated systems can already search at scale under fixed objectives, iterate under explicit evaluation criteria, and optimize efficiently within closed problem spaces. Their strengths are especially obvious in tasks like parameter search, strategy search, candidate generation, and experimental loops.

[Flowchart: inputs (task, metric, boundary) and human setup (prompt, code, data) feed the AI agent's local search loop: read context, propose change, run short experiment, then check "Better?". If yes, keep the change; if no, revert it. Either way, the next iteration starts again from reading context.]

Figure 2. AutoResearch's Workflow

Karpathy’s autoresearch is a particularly clean example. Its core loop is simple: an AI agent modifies code, runs a short experiment, checks whether the result improved, keeps or discards the change, and repeats. What matters is not just that it works, but that the setup is explicit: the task is bounded, the metric is fixed, the search space is constrained, and experiments are directly comparable.
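To make the shape of that loop concrete, here is a minimal sketch in Python. It is not the autoresearch code itself. It only illustrates the propose, run, compare, keep-or-revert cycle under stated assumptions: the names `local_search`, `toy_loss`, and `perturb` are hypothetical, a cheap numeric function stands in for "a short experiment", and a lower score is assumed to be better.

```python
import random
from typing import Callable, Dict, Tuple

Config = Dict[str, float]


def local_search(
    initial: Config,
    evaluate: Callable[[Config], float],
    propose: Callable[[Config, random.Random], Config],
    iterations: int = 200,
    seed: int = 0,
) -> Tuple[Config, float]:
    """Keep-or-revert loop over a fixed metric (lower score is better)."""
    rng = random.Random(seed)
    best = dict(initial)
    best_score = evaluate(best)
    for _ in range(iterations):
        candidate = propose(best, rng)  # propose a change
        score = evaluate(candidate)     # run a short experiment
        if score < best_score:          # better?
            best, best_score = candidate, score  # keep
        # otherwise revert: the candidate is dropped and `best` is unchanged
    return best, best_score


def toy_loss(cfg: Config) -> float:
    """Hypothetical stand-in for an experiment: distance from a hidden optimum."""
    return (cfg["lr"] - 0.3) ** 2 + (cfg["width"] - 2.0) ** 2


def perturb(cfg: Config, rng: random.Random) -> Config:
    """Hypothetical proposal step: nudge one setting and return a new config."""
    key = rng.choice(sorted(cfg))
    changed = dict(cfg)
    changed[key] += rng.gauss(0.0, 0.1)
    return changed


start = {"lr": 1.0, "width": 0.0}
best, best_score = local_search(start, toy_loss, perturb)
```

Everything the loop needs is supplied from outside: the starting point, the metric, and the space of allowed changes. The loop never decides what `evaluate` should measure; it only climbs whatever it is given.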

That is exactly where today’s strongest auto research systems shine: inside a well-defined local loop.

But the qualifier matters: the loop has to be well defined.

It already assumes something crucial. The problem has been defined. The objective has been given. The evaluation criteria already exist. In other words, the strength of auto research emerges inside a problem space that is already there.

These systems are powerful at solving known problems efficiently. That is real. But it is not the same as addressing the scarcest part of research itself.

Research is not a giant question bank

The real world of research is not a giant question bank filled with problems waiting to be solved.

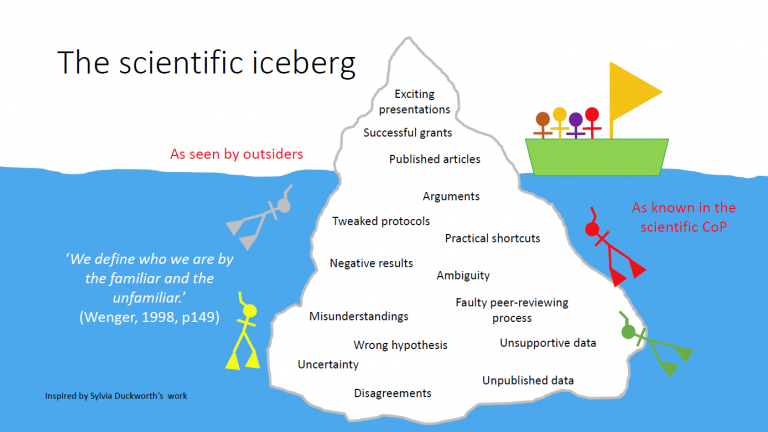

It is closer to an iceberg.

The visible part—the part with names, benchmarks, objective functions, and shared evaluation criteria—is only the part that has already surfaced. Beneath it lies a much larger structure: vague intuitions, unresolved tensions, neglected anomalies, changing relevance, emerging tools, and the slow process by which a field learns to see something as a problem at all.

That is what many current discussions miss.

Research problems do not simply present themselves. Whether something becomes a problem depends on whether researchers notice it, whether a community comes to recognize its importance, whether new tools make it legible, and whether someone is willing to stay with it before it looks mainstream, safe, or obviously productive.

Research is not only about solving problems. It is also about deciding what deserves to become a problem in the first place.

Many questions that later seem obviously important did not begin with a stable benchmark, a clear name, or a shared evaluation standard. They began as vague unease: something in the current picture did not quite fit; an existing paradigm failed to explain a crucial fact; a neglected corner turned out to constrain the whole system; or a once-marginal question became newly important because the world, the tools, or the science had changed.

At moments like these, the problem does not surface on its own. It first has to be seen.

And that leads to a deeper point: not every problem worth solving will automatically enter research attention. If no one notices it, names it, and stays with it, it may never become something systematically pursued. It will not automatically acquire a benchmark, an objective function, or a form suitable for auto research.

What is scarce in research, then, is not only compute, data, or methods. It is attention itself.

What researchers actually do

If a problem never enters view, it is unlikely to be solved at all.

So what makes researchers truly hard to replace is not, first and foremost, their ability to solve problems. It is their ability to bring problems into view.

This is not to downplay solving. Solving matters. So do experiments, implementation, validation, and iteration. But the deepest value of a researcher lies not simply in solving an already defined problem faster. It lies in bringing into collective attention those problems that are still inarticulate, undervalued, or not yet fully visible.

That ability is hard to benchmark. It includes a feel for importance, a sensitivity to real bottlenecks, a sense for what is merely fashionable and what may matter in the long run, and the ability to shape something vague and unstable into a problem that can actually be discussed and pursued. It also includes a willingness to take responsibility for a problem before it is mainstream.

This is why I do not find “researchers will be replaced” a very convincing claim. That claim assumes the researcher is essentially a slower, more expensive solver. But if we understand researchers instead as discoverers of problems, judges of significance, and setters of direction, then stronger auto research does not create a simple replacement relationship. It accelerates the parts of research that are already clear, searchable, and standardizable.

The higher-level questions—what deserves study, what should enter the problem space, and what is worth sustained commitment—do not disappear as lower-level solving gets cheaper. If anything, they become more important.

Because when “how to do it” becomes cheaper, “what to do” becomes more valuable.

Why this may actually be good news

From this angle, auto research may not be a replacement crisis at all. It may be a reclarification of the division of labor.

If automated systems can absorb forms of research labor that are naturally enumerable, searchable, and standardizable, that is not necessarily bad news. In many cases, it is a liberation. Researchers were never meant to spend most of their time trapped in lower-level search, repeated trial and error, and mechanical iteration. Those layers consumed so much effort not because they defined the value of research, but because our tools were not yet good enough.

The autoresearch example makes this especially clear. It does not describe a world in which the human researcher disappears. It describes a world in which the human stops hand-editing every experiment in the usual way and instead operates at the level of framing: setting context, instructions, boundaries, and the basic organization of the loop. The automation takes over bounded experimental search; the human remains responsible for defining the task, defining the evaluation logic, and deciding what kind of progress is worth pursuing.

That is why I do not see projects like this as evidence that the researcher is vanishing. If anything, they suggest the opposite: the human role is moving upward. The researcher becomes less a manual tuner of every local experiment, and more a designer of objectives, constraints, evaluation logic, and direction.

Now that the tools are improving, many lower-level tasks can finally be absorbed automatically. What matters about this shift is not the dramatic suggestion that researchers, too, can now be replaced. It is that the shift forces us to ask a better question: if the parts that were always formalizable and automatable are gradually being taken over, where should researchers actually spend their time?

If auto research can absorb more of that labor, then what it offers is not the marginalization of researchers, but the possibility of redirecting attention toward what is more worthy of human effort: discovering problems, making judgments of value, building connections across fields, choosing directions, and taking responsibility for meaning.

The real adjustment researchers need to make

If that is right, then the real task is not to preserve old advantages, but to upgrade the role of the researcher.

If researchers continue to define their value mainly through lower-level execution, then stronger tools will naturally produce stronger anxiety. The comparison becomes immediate: who reads faster, writes faster, searches more broadly, tries more options, and moves more efficiently inside an already defined problem space. And that is exactly the layer automation is best at swallowing.

But the picture changes once researchers begin moving upward.

What becomes worth cultivating is not merely faster information processing, but stronger problem sense: the ability to recognize which absences deserve attention, to sense tensions a field has not yet fully noticed, and to keep asking not just “Can this be done?” but “Why is this worth doing?” and “What will remain missing if no one works on this?”

This is not anti-technology. It is a more mature use of technology. Let auto research handle large-scale candidate generation, lower-level search, and formalized iteration. Let humans handle framing, filtering, judgment, connection, and direction.

What needs to be protected, then, is not the old research advantage built through patience and stamina alone, but those capacities that remain much harder to standardize: a feel for the value of problems, judgment about long-term direction, sensitivity to real needs, the ability to connect different knowledge systems, and the ability to persist before consensus arrives.

A quieter way to think about this moment

The more I think about it, the less I see auto research as a crisis that deserves to be overdramatized.

It will certainly change research. It will compress many older advantages rooted in diligence, and it will restructure many established workflows. But that does not necessarily amount to bad news. More importantly, it pushes us to stop defining the researcher as a high-intensity information processor and process executor, and to recover a fuller view of research as a practice of attention, judgment, and problem-awareness.

The stronger the tools become, the more researchers should move upward—not by competing with them on lower-level process work, but by returning to the higher-value tasks of seeing problems, naming problems, deciding what deserves to enter view, and judging what merits long-term commitment.

After all, not all important problems surface automatically. Many can only be solved after they are first seen by researchers.

If auto research changes anything, perhaps what it changes is not whether researchers are still needed, but whether they can finally spend less time on how to solve a given problem and more time on which problems deserve to be truly seen.

In that sense, this is not a misfortune for researchers. It may be one of the quiet fortunes of our time.

Note: This blog was polished with the assistance of ChatGPT (GPT-5.4 Thinking).